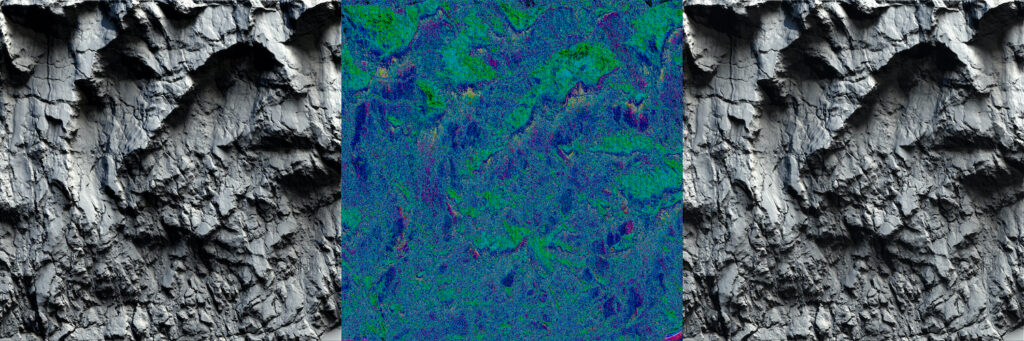

Top image: NoAI by Edit Ballai

Concept artist Edit Ballai is fighting back against the unfair use of human-made art to create AI-generated artworks. She and some industry friends created an app that embeds an AI watermark in original art, tricking internet-scraping bots into thinking the art was generated by an AI so that it won’t get fed back into the machine.

After the initial wow-factor of AI creating impressive artworks seemingly out of thin air, came the cold realization that behind the clever trickery were works of art created by humans. “In the very beginning, AI seemed like a great tool in artists’ hands to help in our work, but it quickly turned into a nightmare”, says Edit Ballai. “So I decided to take action against AI because the situation started to be overwhelming. Especially with the newest generator Unstable Diffusion and all the concerning things I’ve read online that artists end up as a line in a prompt and artists’ artworks are manually fed into the machine.”

When Ballai read this article about Stable Diffusion’s invisible watermarking process, she got the idea to do the same on her own artwork. Stable Diffusion uses an open-source Python library called Invisible Watermark, which embeds a mark that is invisible to the human eye. The purpose of this watermark is most likely to filter out AI-generated images in the future, so that they are not used to train new AI models. “I reached out to a friend of mine, to ask if he can help me with writing a script that would do the exact same thing as Stable Diffusion does”, she says.
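To give a rough idea of how a mark can hide inside an image without being seen, here is a minimal Python sketch. It is not Ballai's script and it does not use the Invisible Watermark library: it swaps in a much simpler technique (least-significant-bit embedding), whereas the real library relies on more robust frequency-domain methods. The function names, the `HYPOTHETICAL_TAG` value and the toy pixel list are all assumptions made for illustration.

```python
# Toy least-significant-bit (LSB) watermark. This is a simplified stand-in,
# NOT the method used by the Invisible Watermark library or Stable Diffusion.

def embed_watermark(pixels, tag):
    """Hide the bytes of `tag` in the lowest bit of successive pixel values."""
    # Turn the tag into a flat list of bits, most significant bit first.
    bits = [(byte >> shift) & 1 for byte in tag for shift in range(7, -1, -1)]
    if len(bits) > len(pixels):
        raise ValueError("image is too small to hold the tag")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the tag bit.
        # Each pixel value changes by at most 1, which the eye cannot see.
        marked[i] = (marked[i] & 0xFE) | bit
    return marked


def extract_watermark(pixels, tag_length):
    """Read `tag_length` bytes back out of the lowest bits of the pixels."""
    tag = bytearray()
    for byte_index in range(tag_length):
        value = 0
        for pixel in pixels[byte_index * 8:(byte_index + 1) * 8]:
            value = (value << 1) | (pixel & 1)
        tag.append(value)
    return bytes(tag)


# Hypothetical tag and a made-up 64-value grayscale "image" (values 0-255).
HYPOTHETICAL_TAG = b"AI-TAG"
image = [(i * 37) % 256 for i in range(64)]

marked = embed_watermark(image, HYPOTHETICAL_TAG)
print(extract_watermark(marked, len(HYPOTHETICAL_TAG)))
```

A scraper that checks for such a tag would read it back with `extract_watermark` and could then skip the image. A simple mark like this one would not survive resizing or recompression, which is one reason real tools use sturdier techniques.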

Safe to use

“We started working on it in December. The python script was working great but the instructions to run it from the terminal seemed to be complicated for regular people. So, I asked another friend to create a standalone application from the script. We published both versions on GitHub. First we posted only the application, but then we also posted the python script with instructions because people needed some reassurance that it’s safe to use.”

Although it’s most likely a temporary solution, since AI developers will adjust to the situation and try new methods, Ballai is determined to keep the fight going. “I already started to work on a new idea that will create another layer of protection, but I’m still in the brainstorming & researching stage with it. I’m in contact with other people too, who also have some interesting projects in development. So it seems that our watermark generator is only the first step out of many.”

AI is here to stay

The call for worldwide legislation to protect art against unfair use by AI programs is getting louder. “We know AI is here to stay one way or another but there are more ethical ways that these models can co-exist with visual artists”, says Ballai. Below are her recommendations.

- Ensure that all AI/ML models that specialize in visual works, audio works, film works, likenesses, etc. utilize public domain content or legally purchased photo stock sets. This could mean that current companies shift their models to public domain content, or even destroy them.

- Urgently remove all artists’ work from data sets and latent spaces via algorithmic disgorgement. Immediately shift plans to public domain models, so that opt-in becomes the standard.

- Set up opt-in programs that pay artists (upfront sums and royalties) every time an artist’s work is utilized for a generation, including training data, deep learning, the final image, the final product, etc.

- Require AI companies to offer true removal of artists’ data from AI/ML models in case licensing contracts are breached.

“I don’t believe AI will completely take over the artists’ work, but there are going to be some changes in the industry”, concludes Ballai. “The way I see it, around 40% of the current jobs will disappear. This technology is powerful and could be a great tool with the right intentions. But currently, it needs strict regulations to ensure it doesn’t violate copyright laws. Probably it will also encourage us to learn new skills to secure our position in the industry.”

The Concept Art Association is looking for funding for their plan to protect artists from AI technologies.