The team at Abstraction had the wonderful opportunity to assist in the development of Mass Effect Legendary Edition. The team collaborated closely with BioWare over an extended period and was eager to share some insight into a few of the larger graphical features they worked on.

Depth of Field

If you have ever played Mass Effect, you will know how much storytelling and how many interactive cutscenes all of the titles contain. Depth of Field (DOF) plays an important role in both cutscenes and conversations. It draws attention to the important elements in the shot and creates a more cinematic atmosphere for the whole experience.

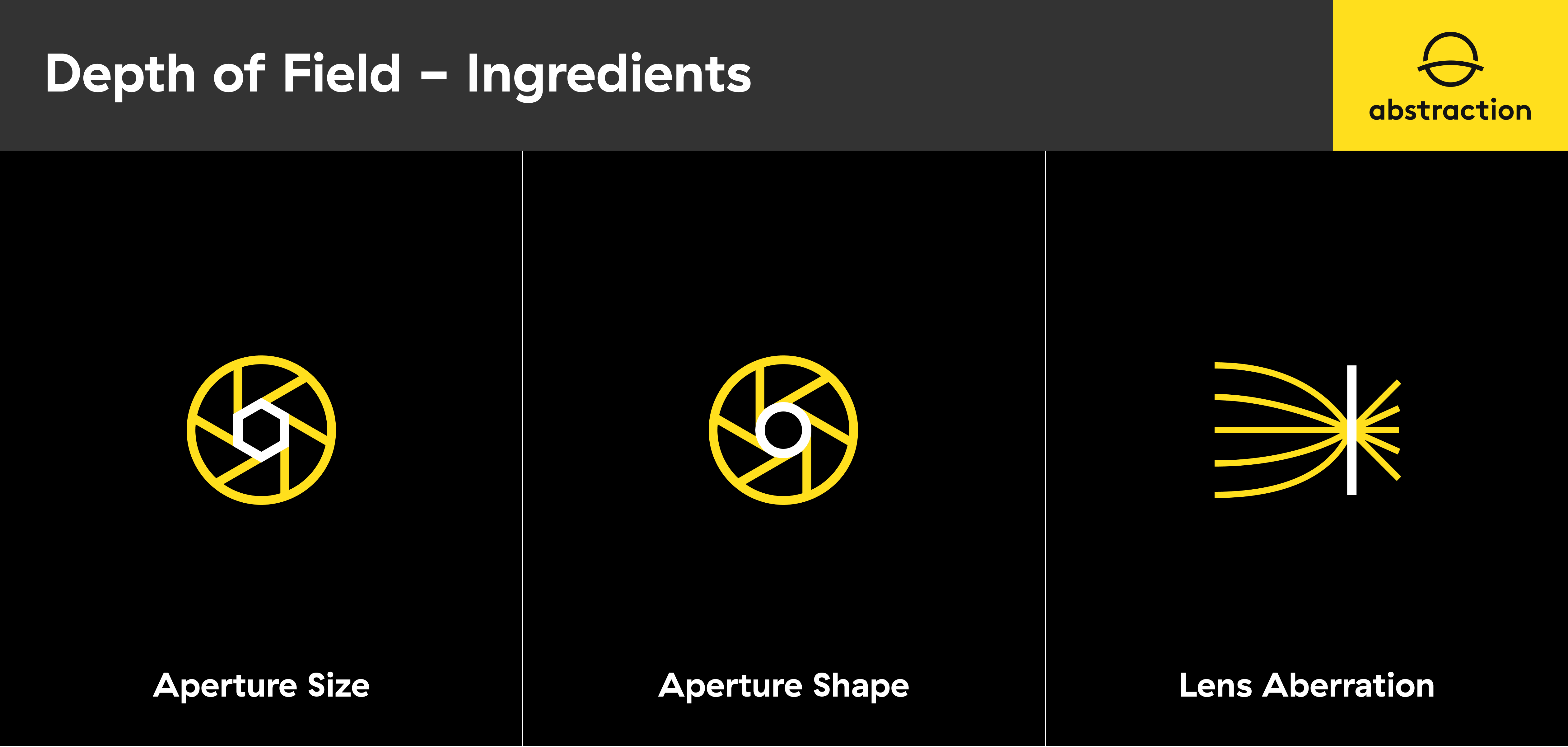

Abstraction’s work on this feature started with further optimizations and improvements to the hexagonal bokeh Depth of Field approach that had been added across the titles. Bokeh generally refers to the way a lens renders out-of-focus points of light, which often take on a distinctive visual shape. This shape is determined by a combination of aperture size, aperture shape, and lens aberrations. To emphasize the sci-fi nature of the titles, they chose a slightly tilted hexagonal shape.

To optimize the existing single-pass hexagon sampling, Abstraction introduced a new two-pass hexagonal shape reconstruction method that significantly reduced the number of samples required. Together with further improvements and tweaks made down the line, this allowed them to cut the per-frame cost of the effect roughly in half.
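
One well-known way to build a hexagon from cheap passes is to chain 1D line blurs into rhombi and combine three rhombi into a hexagon. The article does not say which reconstruction Abstraction used, so the sketch below only illustrates why a multi-pass scheme needs fewer samples. It tracks footprints (which taps contribute) on a hexagonal grid, ignores weights and overlap handling, and every name in it is hypothetical.

```python
# Three axial hex-grid directions 120 degrees apart (illustrative choice).
U, V, W = (1, 0), (-1, 1), (0, -1)


def line(direction, n):
    """Tap offsets of a 1D blur with n + 1 taps along one direction."""
    dq, dr = direction
    return {(k * dq, k * dr) for k in range(n + 1)}


def chain(first, second):
    """Footprint of running blur `second` on the output of blur `first`."""
    return {(aq + bq, ar + br) for aq, ar in first for bq, br in second}


def two_pass_hexagon(n):
    """Union of three rhombi, each built from two chained line blurs."""
    pairs = ((U, V), (V, W), (W, U))
    return set().union(*(chain(line(a, n), line(b, n)) for a, b in pairs))


def single_pass_hexagon(n):
    """Every tap a brute-force gather needs for the same hexagon."""
    span = range(-n, n + 1)
    return {(q, r) for q in span for r in span
            if max(abs(q), abs(r), abs(q + r)) <= n}


def taps_per_pixel(n):
    """(single-pass taps, taps for six unshared line blurs of n + 1 taps)."""
    return len(single_pass_hexagon(n)), 6 * (n + 1)
```

For a hexagon eight taps wide in each direction, `taps_per_pixel(8)` returns 217 taps for the brute-force gather against 54 for the chained line blurs. The single-pass cost grows quadratically with the bokeh size while the multi-pass cost grows linearly.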

The reduced cost also gave Abstraction some leeway to further improve the quality and sharpness of the DOF bokeh shapes. At maximum DOF this already looked great; however, at intermediate DOF strengths, the bokeh shapes still appeared washed out. This was because the DOF result was blended with the sharp scene based on the strength of the DOF. Instead of blending, they scaled the bokeh shapes based on how strong the DOF should appear. For weaker DOF this resulted in smaller bokeh shapes, making the effect appear less strong but much sharper. Overall, these improvements gave them a crisper and more pronounced DOF look.
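
The difference between the two approaches can be seen on a single row of pixels. This is a minimal sketch, not the shipped shader: a 1D box blur stands in for the hexagonal kernel, and the function names and the linear mapping from strength to radius are assumptions.

```python
def box_blur(signal, radius):
    """1D box blur standing in for a bokeh kernel of the given radius."""
    last = len(signal) - 1
    out = []
    for i in range(len(signal)):
        window = signal[max(0, i - radius):min(last, i + radius) + 1]
        out.append(sum(window) / len(window))
    return out


def dof_by_blending(signal, max_radius, strength):
    """Blend approach: full-size blur mixed with the sharp image."""
    blurred = box_blur(signal, max_radius)
    return [s + (b - s) * strength for s, b in zip(signal, blurred)]


def dof_by_scaling(signal, max_radius, strength):
    """Scale approach: shrink the kernel, no blend with the sharp image."""
    return box_blur(signal, int(round(max_radius * strength)))


# A single bright point of light in the middle of a dark row of pixels.
highlight = [0.0] * 8 + [1.0] + [0.0] * 8
blended = dof_by_blending(highlight, max_radius=4, strength=0.5)
scaled = dof_by_scaling(highlight, max_radius=4, strength=0.5)
```

At half strength, `blended` keeps a bright sharp spike surrounded by a faint full-width halo, which is the washed-out look described above. `scaled` produces a smaller shape with uniform brightness and a hard edge.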

There was still a wide range of issues related to DOF being out of focus in a large set of cutscenes across the three Mass Effect titles. After investigating these issues, Abstraction found that the original introduction of the hexagonal DOF had switched to a new f-stop based formula, replacing the original formula that was based on exponential falloff. It was vital to keep the original cutscene ranges intact so that minimal content changes were needed. A solution requiring a full pass over all cutscenes was not an option, since it would take a huge amount of effort for a series with as many cutscenes as Mass Effect. In the end, they added back support for the original exponential falloff approach while keeping support for the f-stop based formula, as that was often used in gameplay situations.
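
As a rough illustration of supporting both models side by side, the sketch below pairs the standard thin-lens circle-of-confusion formula with a stand-in exponential curve. The article does not give either formula as used in the game, so the exponential function, its parameters, and the per-shot dictionary layout are all hypothetical.

```python
import math


def thin_lens_coc(object_dist, focus_dist, focal_length, f_stop):
    """Circle-of-confusion diameter from the standard thin-lens model.

    All lengths share one unit (for example millimetres).
    """
    aperture = focal_length / f_stop
    return (aperture * abs(object_dist - focus_dist) / object_dist
            * focal_length / (focus_dist - focal_length))


def exponential_blur(object_dist, focus_dist, sharp_range, rate):
    """HYPOTHETICAL legacy-style curve: 0 inside the sharp range, then an
    exponential approach to full blur (1.0) outside it."""
    beyond = max(0.0, abs(object_dist - focus_dist) - sharp_range)
    return 1.0 - math.exp(-rate * beyond)


def blur_amount(shot, object_dist):
    """Pick the formula per shot so legacy content keeps its authored values.

    Note the two models return different quantities (a physical diameter
    versus a normalised 0..1 amount); a real renderer would map both to a
    kernel size.
    """
    if shot["model"] == "exponential":
        return exponential_blur(object_dist, shot["focus"],
                                shot["range"], shot["rate"])
    return thin_lens_coc(object_dist, shot["focus"],
                         shot["focal_length"], shot["f_stop"])
```

Selecting the model per shot is one way to leave authored cutscene values untouched while gameplay cameras keep using f-stop settings.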

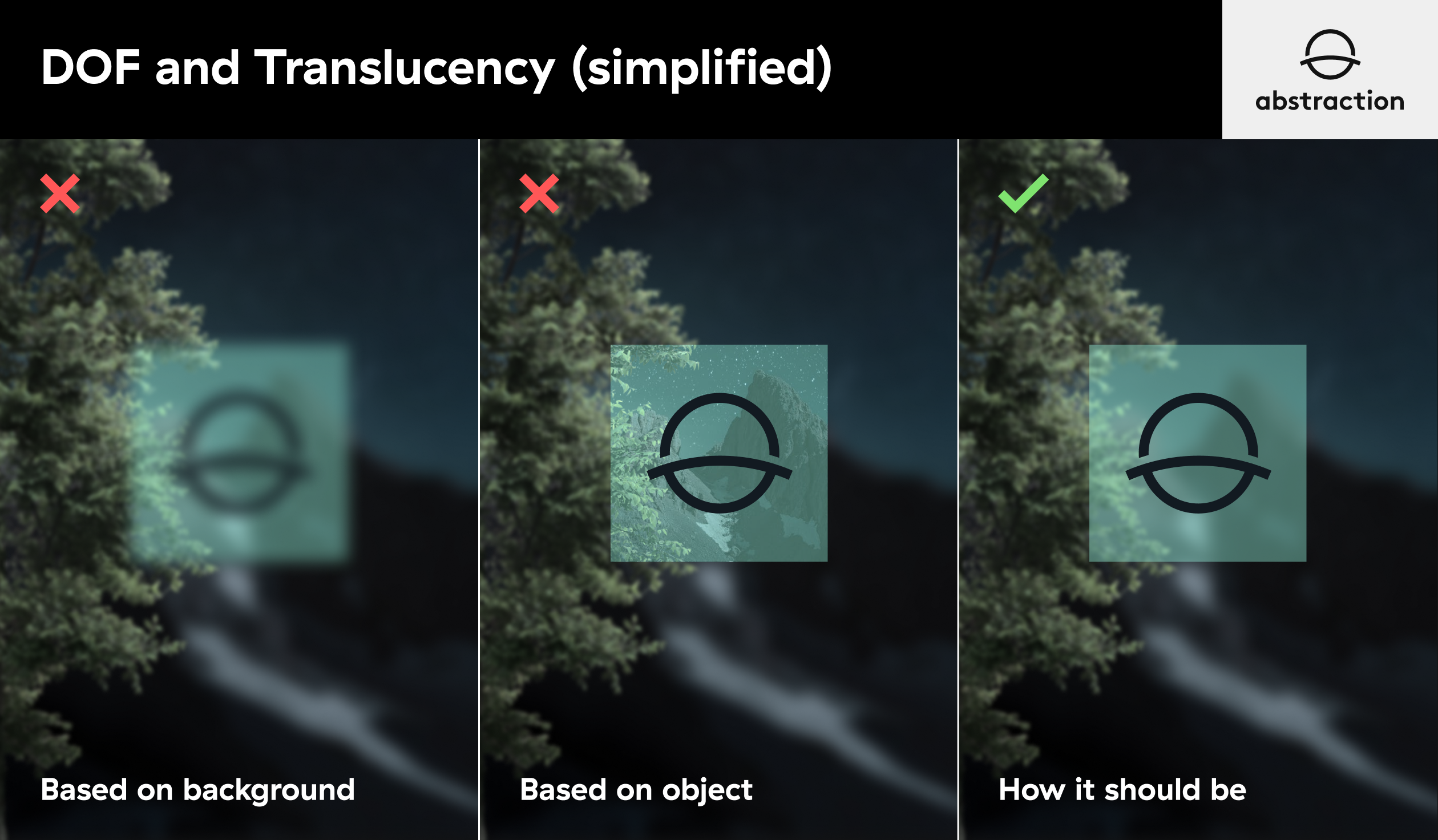

Mass Effect contains a lot of holograms and other translucent objects that appear during cutscenes. Depth of Field is often calculated based on the depth of opaque objects in the scene. This can cause translucent objects to appear out of focus even when you expect them to be sharp. But you can’t use the translucent object depth either because then the background behind the translucent object might appear sharp when you expect it to be out of focus. Abstraction added functionality that allowed them to combine translucent object depths but factor in how transparent they appear at that point. While this was only a rough approximation, it did resolve a lot of the visually jarring issues these transparent objects caused.
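
A minimal sketch of this idea, assuming the translucent depths are mixed into the opaque depth the same way colors are alpha blended. The function name, the layer representation, and the far-to-near ordering are assumptions rather than details from the article.

```python
def lerp(a, b, t):
    return a + (b - a) * t


def dof_depth(opaque_depth, translucent_layers):
    """Blend translucent depths over the opaque depth, weighted by opacity.

    translucent_layers: iterable of (depth, opacity) pairs, opacity in [0, 1].
    Layers are applied far to near, like ordinary alpha blending.
    """
    depth = opaque_depth
    for layer_depth, opacity in sorted(translucent_layers, reverse=True):
        if layer_depth < opaque_depth:  # skip layers hidden behind opaque geometry
            depth = lerp(depth, layer_depth, opacity)
    return depth
```

A nearly opaque hologram pulls the focus depth onto itself, while a faint pane of glass barely changes it, so the background behind it still blurs as expected.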

Improved HBAO

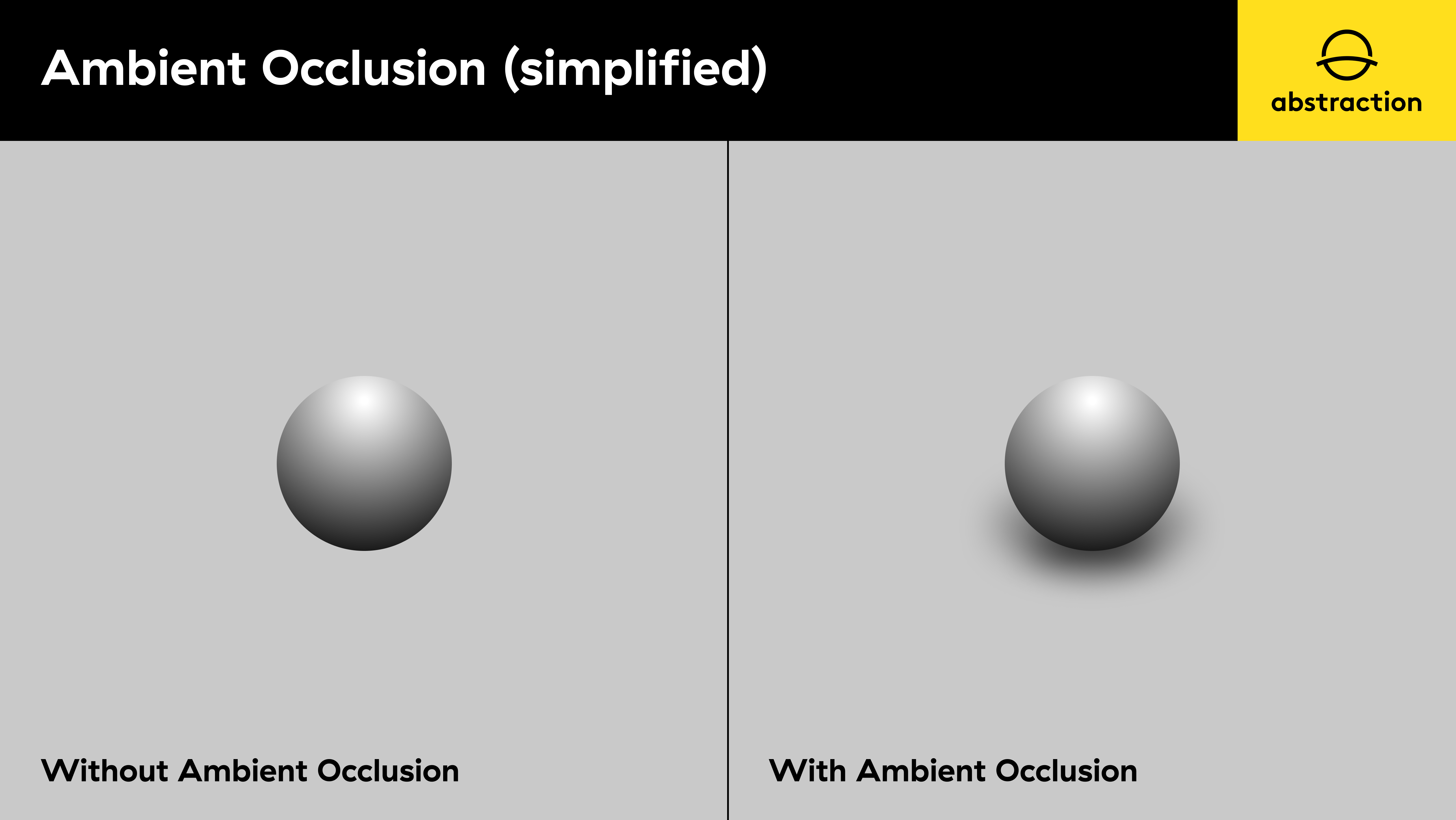

NVIDIA HBAO+ is a screen-space ambient occlusion (SSAO) algorithm that provides realistic shading around objects and surfaces that occlude light, with more precision than many earlier ambient occlusion techniques. However, the default NVIDIA implementation caused white artifacts around characters, so Abstraction integrated an enhanced HBAO+ variation with additional calculations that improved detail on geometry while removing artifacts coming from distant surfaces.

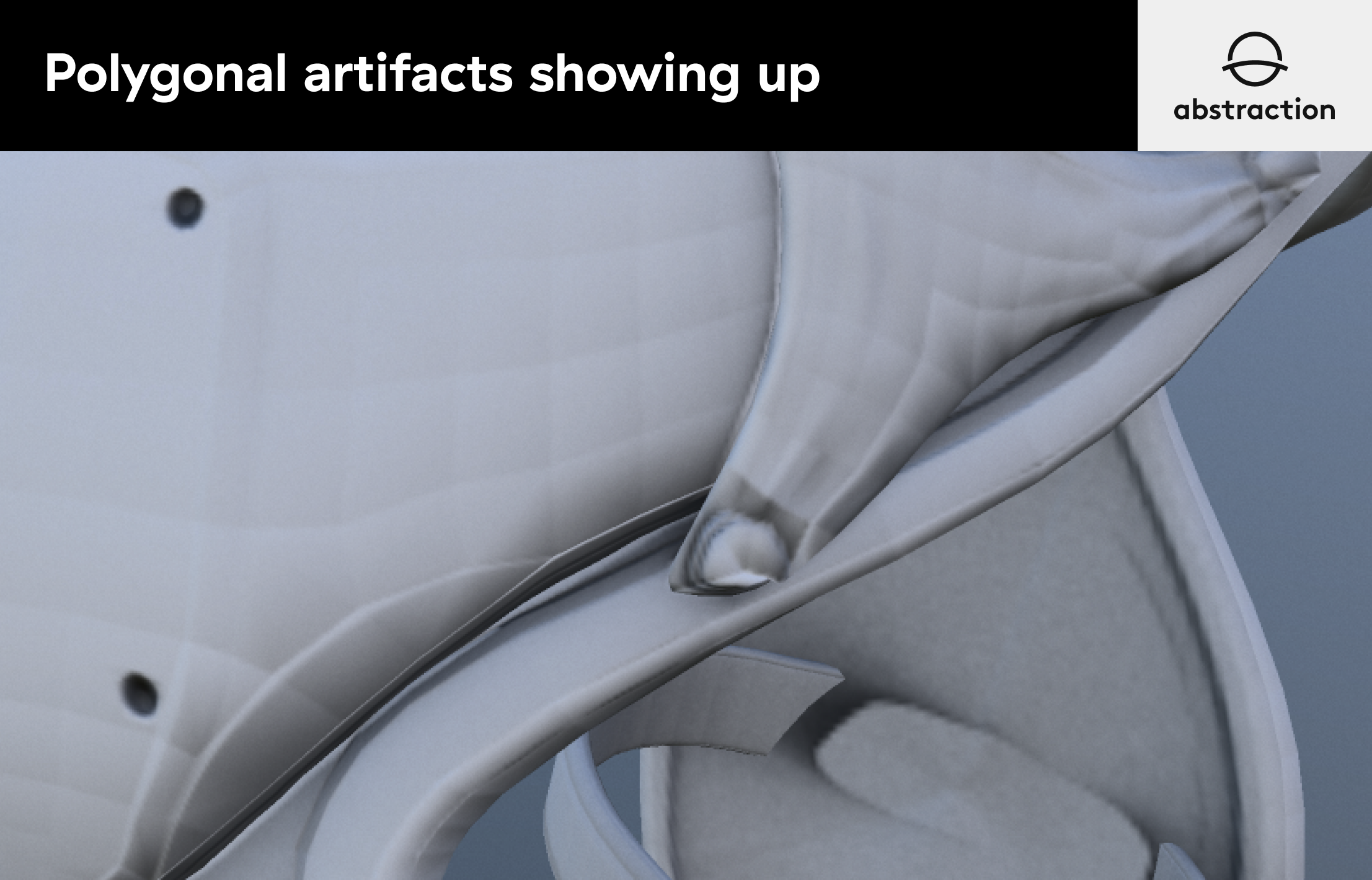

Mass Effect Legendary Edition introduced HBAO+ to all three games to improve the overall quality of screen-space ambient occlusion. Out of the box, HBAO+ provides great results in combination with normal reconstruction, that is, obtaining per-pixel surface normals from the Z-Buffer. However, Abstraction soon noticed this didn’t work well for close-up shots of characters, as faces showed polygonal artifacts revealing their underlying geometry.
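
Normal reconstruction from depth can be sketched as follows: unproject neighbouring pixels to view space and take the cross product of the position differences. Because the result depends only on rasterized geometry, every pixel of a flat triangle receives the same normal, which is why faces look faceted. The pinhole camera parameters, axis convention, and function names here are assumptions.

```python
import math


def view_position(depth, u, v, focal_px, cx, cy):
    """Unproject pixel (u, v) with view-space depth into a 3D position."""
    z = depth[v][u]
    return ((u - cx) / focal_px * z, (v - cy) / focal_px * z, z)


def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def reconstruct_normal(depth, u, v, focal_px, cx, cy):
    """Normal from forward differences of neighbouring positions.

    Convention (an assumption): +x right, +y down, +z into the screen,
    so a surface facing the camera has a negative z component.
    """
    p = view_position(depth, u, v, focal_px, cx, cy)
    dpdx = sub(view_position(depth, u + 1, v, focal_px, cx, cy), p)
    dpdy = sub(view_position(depth, u, v + 1, focal_px, cx, cy), p)
    n = cross(dpdy, dpdx)
    length = math.sqrt(n[0] ** 2 + n[1] ** 2 + n[2] ** 2)
    return (n[0] / length, n[1] / length, n[2] / length)
```

A depth buffer with constant depth yields a normal pointing straight back at the camera. No information from normal maps or smoothed vertex normals can appear in the result.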

The usual way to solve this problem is to adjust the AO bias until surfaces with similar angles are left unoccluded, while keeping the natural shadows produced at sharp angles and corners. But Mass Effect Legendary Edition contained a wide variety of scenery, cutscenes, and characters, so using a bias high enough to keep artifacts in check washed out too much detail.

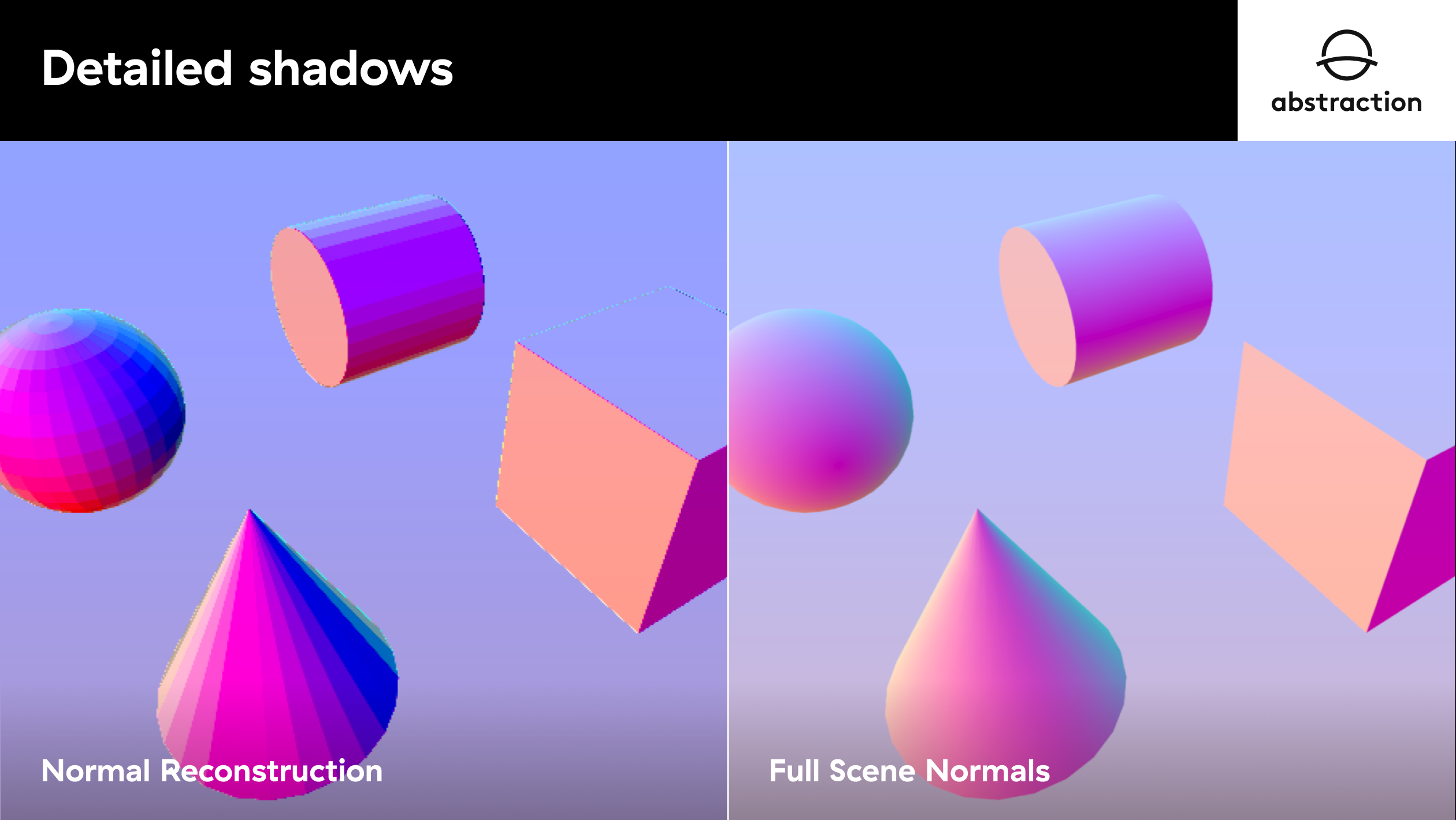

To bring back detailed shadows on characters and environments, Z-Buffer normal reconstruction was replaced with full scene normals. These included high-quality normal maps, which not only preserved small details but also softened hard edges on characters’ faces and curved geometry.

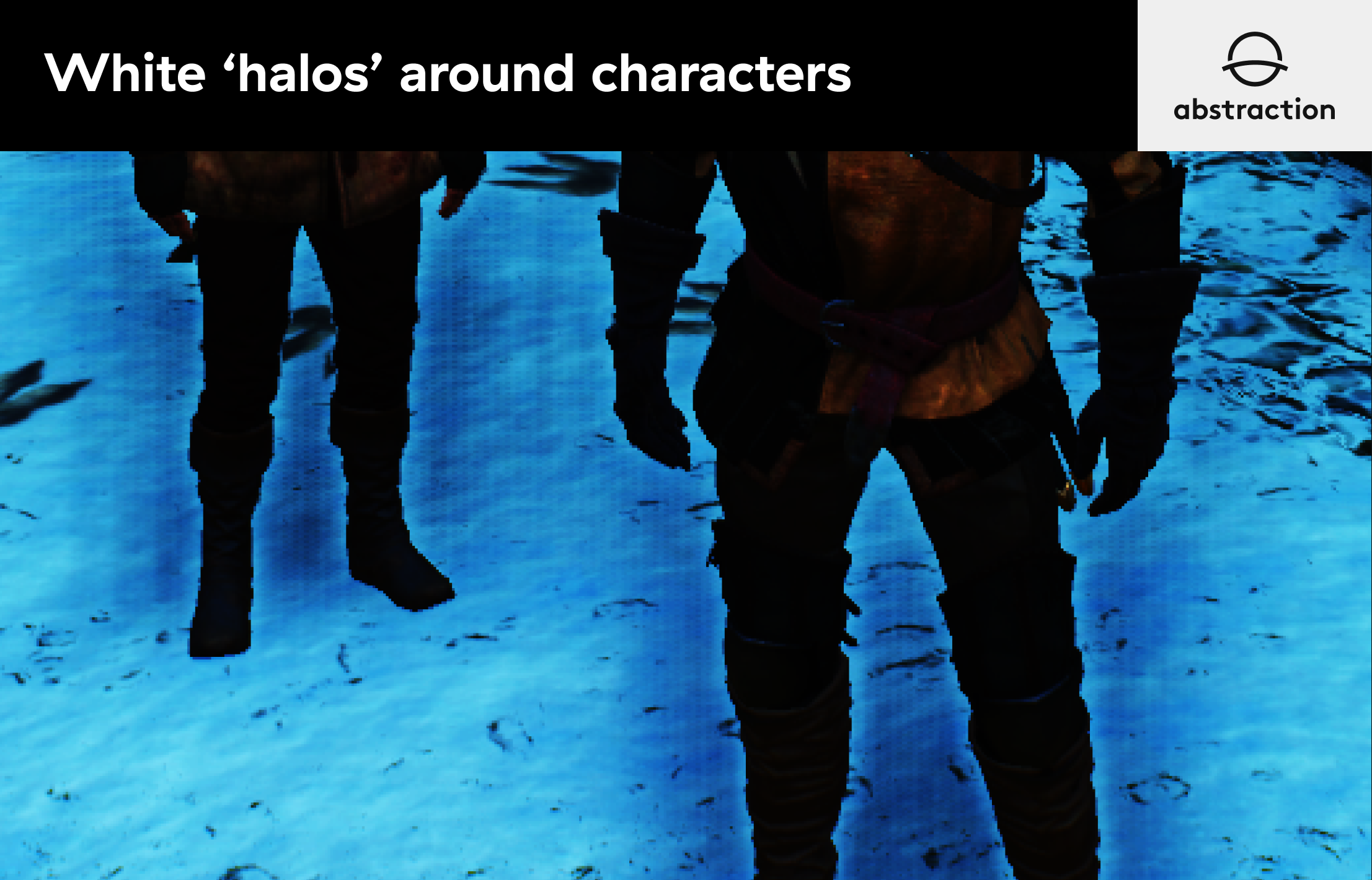

The overall look was greatly improved, but Abstraction soon noticed that a new kind of artifact, a ‘white halo’, had started appearing around characters.

The original HBAO+ implementation addresses this problem by creating multiple depth layers to keep foreground and background objects apart, but such a solution not only required additional tweaking, it also increased render times.

Instead, Abstraction integrated a different occlusion formula that uses tangent computations to filter out distant objects and flat surfaces. This new computation greatly reduced artifacts, required little to no tweaking from artists, and kept detailed shadows in place, which was a key element technical artists were looking for.
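
The article does not give the formula itself. As a loose illustration of the general idea, the sketch below computes a horizon-style occlusion term for one sample: it measures how far the sample rises above the surface's tangent plane and fades the result with distance. The function, its parameters, and the quadratic falloff are hypothetical and are not Abstraction's or NVIDIA's implementation.

```python
import math


def sample_occlusion(point, normal, sample, radius, tangent_bias=0.1):
    """Occlusion contributed by one neighbouring sample (0.0 = none).

    point, normal, sample are 3-tuples in view space; normal is unit length.
    radius and tangent_bias are hypothetical tuning values.
    """
    v = (sample[0] - point[0], sample[1] - point[1], sample[2] - point[2])
    dist = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if dist == 0.0 or dist >= radius:
        return 0.0  # geometry outside the radius is ignored entirely
    # Sine of the sample's elevation above the tangent plane of the surface.
    sin_elevation = (v[0] * normal[0] + v[1] * normal[1]
                     + v[2] * normal[2]) / dist
    # Samples on or just above the tangent plane count as flat surface.
    above_tangent = max(0.0, sin_elevation - tangent_bias)
    falloff = 1.0 - (dist / radius) ** 2
    return above_tangent * falloff
```

With a term of this shape, a distant background behind a character contributes nothing, and neighbouring pixels on the same flat surface contribute nothing either. Only nearby geometry that rises above the surface darkens it.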

Translucency Sorting and Hair Rendering

Each of the individual Mass Effect titles had a different render path, especially for hair and translucency primitives. Abstraction’s approach was to unify these render paths in such a way that they wouldn’t require content changes, while at the same time improving the lighting and blending of hair, volumetric VFX, and translucent geometry.

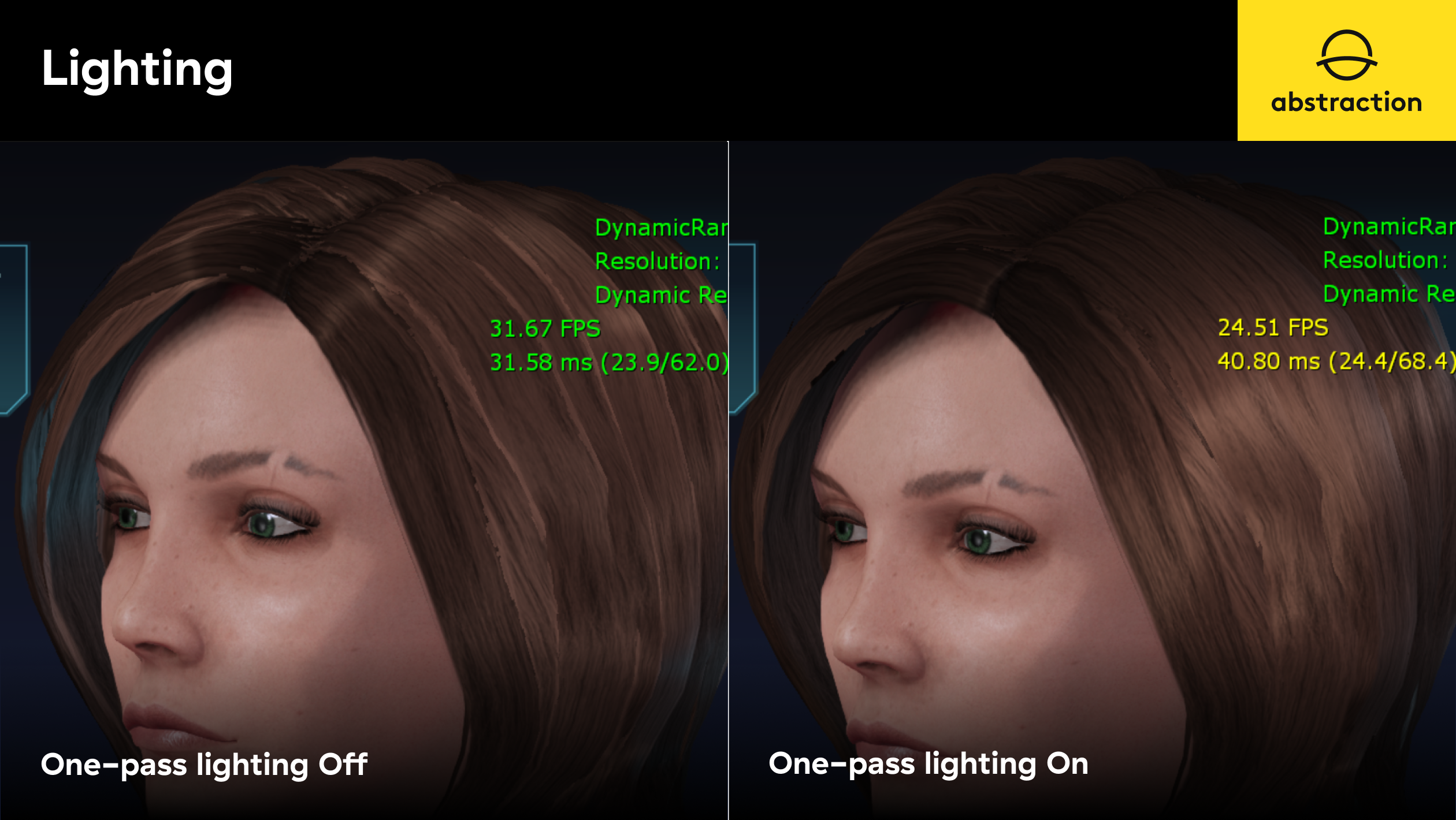

One of the features of Mass Effect Legendary Edition was the introduction of high-quality hair assets. These new assets required precise lighting and ordering of hair strands, and the original games did not have a hair rendering pipeline that could accommodate these requirements. A technique called ‘One-Pass Lighting’ was introduced, which gathers the direct lighting environment in real time and bakes it into a single spherical harmonic light that can render the entire hair asset in a single pass.

Initially, this technique was introduced in ME3 and ME2, but ME1 lagged behind since it had a different rendering path for translucent objects.
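
The baking step can be sketched with two-band (four-coefficient) spherical harmonics, using the standard real SH basis constants and cosine-lobe convolution weights. The band count, the restriction to directional lights, and the function names are assumptions, since the article does not specify them.

```python
import math

Y0 = 0.282095           # SH band-0 basis constant, sqrt(1 / (4 * pi))
Y1 = 0.488603           # SH band-1 basis constant, sqrt(3 / (4 * pi))
A0 = math.pi            # cosine-lobe convolution weight for band 0
A1 = 2.0 * math.pi / 3  # cosine-lobe convolution weight for band 1


def sh_basis(d):
    """Band 0 and band 1 real SH basis evaluated for unit direction d."""
    x, y, z = d
    return (Y0, Y1 * y, Y1 * z, Y1 * x)


def bake_lights(lights):
    """Accumulate directional lights into 4 SH coefficients per RGB channel.

    lights: iterable of (unit_direction_to_light, (r, g, b)) pairs.
    """
    coeffs = [[0.0] * 4 for _ in range(3)]
    for direction, color in lights:
        basis = sh_basis(direction)
        for channel in range(3):
            for i in range(4):
                coeffs[channel][i] += color[channel] * basis[i]
    return coeffs


def shade(coeffs, normal):
    """Diffuse RGB lighting for a surface normal from the baked SH light."""
    basis = sh_basis(normal)
    weights = (A0, A1, A1, A1)
    return tuple(max(0.0, sum(w * c * b for w, c, b in zip(weights, ch, basis)))
                 for ch in coeffs)
```

However many lights are baked, `shade` evaluates the same four coefficients per channel, which is what allows all of the hair to be lit in one pass. With two bands, a single light is approximated as `0.25 + 0.5 * cos(angle)`, so the result is softer than exact Lambert shading.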

In addition to lighting, artists on ME1 were having problems adjusting priorities on translucent primitives like glass windows, water, or volumetric VFX. Abstraction soon learned that ME1 content was made using a different set of parameters that controlled translucent ordering.

Abstraction upgraded and unified the rendering path across all three games, integrated One-Pass Lighting into ME1, and changed translucent rendering to make it work with both existing and new content.

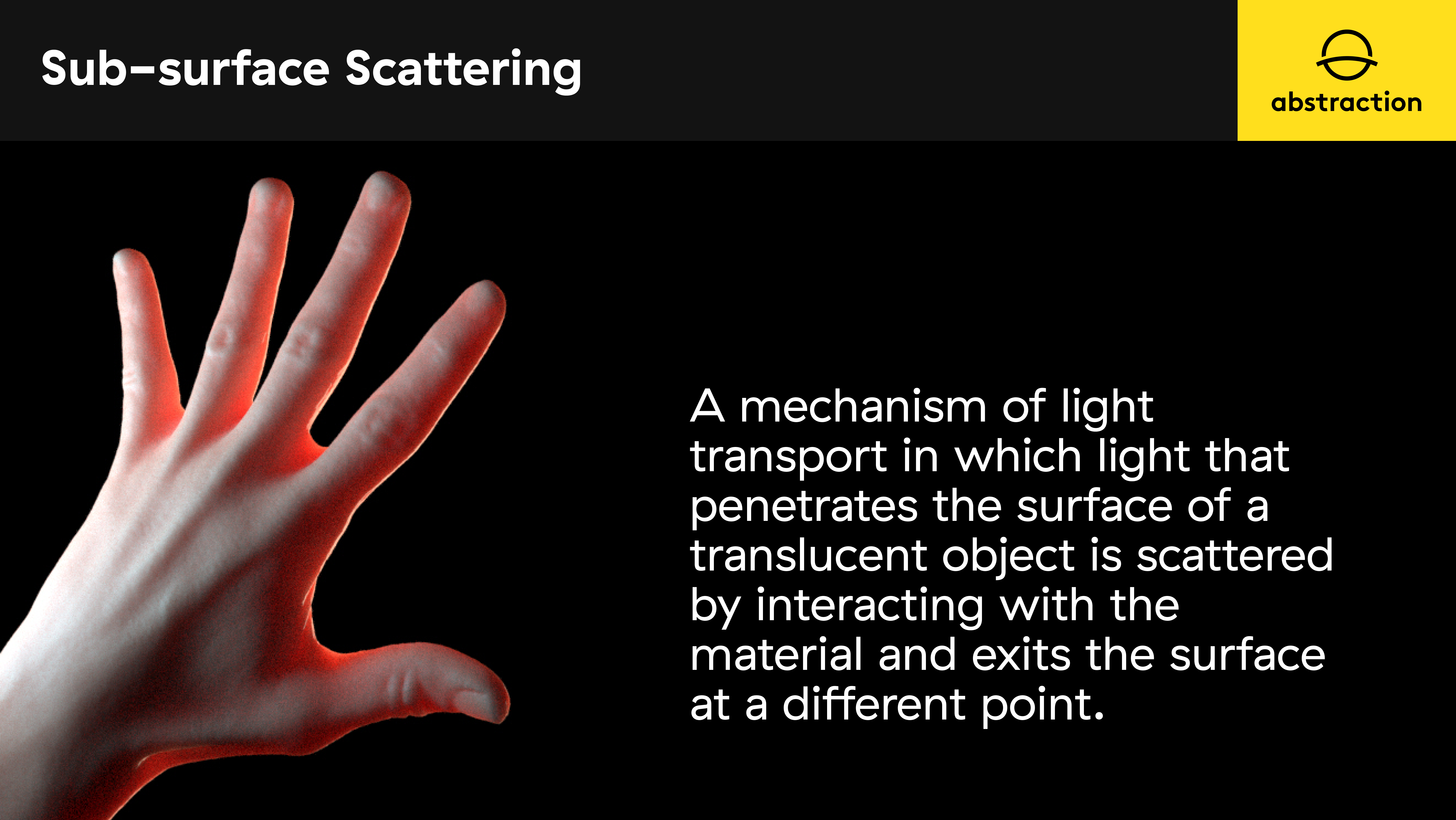

Character Skin and Subsurface Scattering

The UE3 version used for the project did not support subsurface scattering (SSS), and upgrading to a newer engine version was not possible.

Abstraction evaluated many common approaches, such as texture-space lighting, but they were almost impossible to implement without major changes to the rendering pipeline and materials.

They finally implemented a modified per-channel wrap lighting approach. It was simple and fast, adding only a few instructions to the main shader, and required just one new material parameter, which determined the wrap coefficient for each color channel. Tweaking the coefficient for the “blood-color” channels was enough to create the illusion of subsurface scattering.
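
A minimal sketch of per-channel wrap lighting, using the common wrap formula `(N.L + w) / (1 + w)`. The article says the approach was modified but not how, so this shows only the textbook form. The parameter name and the coefficient values are illustrative assumptions.

```python
def wrap_diffuse(n_dot_l, wrap_rgb):
    """Per-channel wrap lighting: (N.L + w) / (1 + w), clamped at zero.

    wrap_rgb is the single (hypothetically named) material parameter holding
    one wrap coefficient per colour channel; w = 0 is plain Lambert.
    """
    return tuple(max(0.0, (n_dot_l + w) / (1.0 + w)) for w in wrap_rgb)


# Illustrative skin values only: red wraps furthest past the terminator.
SKIN_WRAP = (0.5, 0.1, 0.05)
```

Just past the shadow terminator, for example at `n_dot_l = -0.2`, only the red channel still receives light with these values. That produces the reddish falloff that reads as light bleeding through skin.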

This technique allowed Abstraction to support approximate subsurface scattering without any noticeable performance impact or changes to the engine.

About Abstraction

Abstraction is one of the leading adaptation and co-development studios operating in the video game industry today. The Netherlands HQ is home to more than 50 people, and the studio also works with contributors and collaborators from all over the world. They surround themselves with incredibly talented people who naturally create a culture of discovery and innovation. Abstraction has a dedicated team working on innovative in-house technology based on the state of the art in graphics rendering and advanced audio generation. They are already at the frontier of gaming technology and are committed to continued innovation and to applying it in their ongoing work. After spending many years creating and perfecting the in-house cross-platform technology “Silverware”, they have their eyes on the latest in ray tracing technology, powered by Microsoft and NVIDIA, with the aim of seeing this tech become mainstream.